EU AI Act for US Startups: The Deployer vs Provider Trap

Update (11 May 2026): The AI Act Omnibus deal reached at the May trilogue moves the 2 August 2026 deadline for Annex III high-risk obligations to 2 December 2027, pending formal adoption and publication in the Official Journal. Article 50 transparency moves to 2 November 2026. See: EU AI Act High-Risk Deadline Delayed to December 2027.

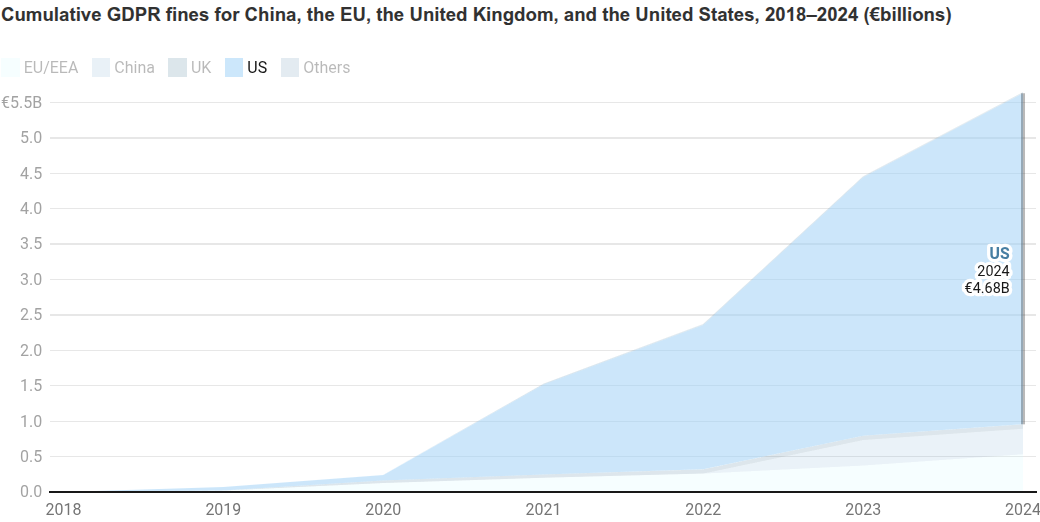

Most US founders assume the EU AI Act is an EU problem. They’re wrong, for the same reason they were wrong about GDPR. EU authorities have issued €4.68 billion in GDPR fines to US companies, 83% of all GDPR fines by value, and the AI Act reaches non-EU companies on the same extraterritorial logic.

If you’ve integrated OpenAI, Claude, or any other AI API into your product and shipped it to users under your own brand, you are the provider of that AI system under Article 3(3) of the EU AI Act. Not OpenAI, not Anthropic: you. A SaaS with a GPT-4-powered customer support bot and any EU users is, in the Act’s terms, the provider of that bot.

Article 3(3) defines a provider as anyone who “develops an AI system… and places it on the market or puts the AI system into service under its own name or trademark.” Recital 97 covers the API scenario directly: AI models “require the addition of further components, such as for example a user interface, to become AI systems.” The moment you wrap the API in your product and ship it, you’ve created an AI system, and you’re its provider.

What that means in practice depends heavily on your system’s risk classification. The obligations range from manageable (transparency disclosures for a customer chatbot) to substantial (full conformity assessment for a hiring tool). But the provider/deployer question comes first, because it decides which set of obligations you carry. Most startups assume they’re the deployer. In the common API-integration case, they’re not.

Who the Act applies to

Article 2 defines scope. The relevant clauses for US companies:

Article 2(1)(a): Providers who place AI systems on the market or put them into service in the EU, “irrespective of whether those providers are established or located within the Union or in a third country.”

Article 2(1)(c): Providers and deployers established or located outside the EU, “where the output produced by the AI system is used in the Union.”

If you have EU users and your product uses AI to produce outputs those users interact with, the Act applies to you. Where your company is incorporated, where your servers are, and where your team sits make no difference to your responsibilities under the Act.
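A simplified reading of the Article 2(1) scope test can be sketched in Python. The `Deployment` fields and the `ai_act_in_scope` function are hypothetical names for illustration; the sketch covers only points (a) to (c) of Article 2(1) and ignores the exclusions later in Article 2, so treat it as a first-pass filter, not a legal determination.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical description of one AI system and where it is used."""
    role: str                  # "provider" or "deployer"
    established_in_eu: bool    # is the company established or located in the EU?
    placed_on_eu_market: bool  # is the system offered to EU customers?
    output_used_in_eu: bool    # do EU users see or rely on the system's output?


def ai_act_in_scope(d: Deployment) -> bool:
    """Simplified reading of Article 2(1)(a)-(c); ignores the Article 2 exclusions."""
    # 2(1)(a): providers placing systems on the EU market, wherever established
    if d.role == "provider" and d.placed_on_eu_market:
        return True
    # 2(1)(b): deployers established or located in the EU
    if d.role == "deployer" and d.established_in_eu:
        return True
    # 2(1)(c): non-EU providers and deployers whose output is used in the EU
    if not d.established_in_eu and d.output_used_in_eu:
        return True
    return False
```

A US SaaS company acting as provider, with no EU establishment but EU customers, comes out in scope: `ai_act_in_scope(Deployment("provider", False, True, True))` returns `True`.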

This is the same extraterritorial logic GDPR used. Many US companies learned that lesson late.

The provider trap

The Act distinguishes between two roles:

Provider (Article 3(3)): A natural or legal person who develops an AI system and places it on the market or puts it into service under their own name or trademark. Providers have the heaviest compliance obligations.

Deployer (Article 3(4)): A natural or legal person who uses an AI system under their authority, except in the course of a personal, non-professional activity. Deployers have lighter obligations, principally the requirements in Article 26 for high-risk systems.

Here’s the trap most startups fall into: they assume that because they’re using OpenAI’s API, they’re a deployer. They think the provider is OpenAI.

That’s not how the Act works.

If you’ve built a product where you:

- Call the OpenAI or Anthropic API

- Integrate the AI into your product under your brand

- Put it in front of your users

Then you are the provider of that AI system under Article 3(3). The Act assigns provider status to whoever ships the resulting product, not whoever built the underlying model.

Most people treat “AI model” and “AI system” as interchangeable. The Act doesn’t. Recital 97 draws a precise distinction: AI models “do not constitute AI systems on their own” and “require the addition of further components, such as for example a user interface, to become AI systems.” GPT-4 and Claude are models. The product you built on top of them, with your UI, your application logic, and your deployment, is the AI system. OpenAI developed the model. You developed the AI system.

OpenAI and Anthropic are providers of their general-purpose AI models, with obligations under Chapter V (Articles 51–56). But the customer-facing system, the thing your users actually use, is yours. The Act even has a term for your position: Article 3(68) defines a “downstream provider” as a provider of an AI system that integrates an AI model, whether the model is its own or supplied by another company under contract.

For high-risk systems (Article 6 and Annex III), provider obligations include the full stack:

- Technical documentation under Article 11 and Annex IV

- Risk management system under Article 9

- Data governance measures under Article 10

- Human oversight by design under Article 14

- Accuracy, robustness, cybersecurity under Article 15

- Conformity assessment before placing on the EU market (Article 43)

- EU Declaration of Conformity under Article 47

- Registration in the EU database under Article 49 (the database itself is established by Article 71)

- Post-market monitoring under Article 72
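For tracking purposes, the provider list above can be kept as a simple checklist keyed by obligation. The dictionary keys and the `outstanding` helper are hypothetical; the list mirrors this article, not the full text of Chapter III (for example, the quality management system under Article 17 is not included).

```python
# Provider obligations for high-risk systems, mirroring the list above.
# Not exhaustive: e.g. the Article 17 quality management system is omitted.
HIGH_RISK_PROVIDER_OBLIGATIONS = {
    "technical_documentation": "Article 11 / Annex IV",
    "risk_management_system": "Article 9",
    "data_governance": "Article 10",
    "human_oversight": "Article 14",
    "accuracy_robustness_cybersecurity": "Article 15",
    "conformity_assessment": "Article 43",
    "eu_declaration_of_conformity": "Article 47",
    "eu_database_registration": "Article 49 / Article 71",
    "post_market_monitoring": "Article 72",
}


def outstanding(completed: set[str]) -> list[str]:
    """Return obligations not yet marked complete, with their article references."""
    return [
        f"{name} ({ref})"
        for name, ref in HIGH_RISK_PROVIDER_OBLIGATIONS.items()
        if name not in completed
    ]
```

Calling `outstanding({"risk_management_system", "data_governance"})` lists the seven items still open, each tagged with the article to read.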

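Deployers of high-risk systems have a narrower, separate set of obligations under Article 26, such as following the provider’s instructions for use, assigning human oversight, and keeping logs. The next section covers why most startups aren’t building high-risk systems, and what actually applies to them.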

Not everything is high-risk

Most startups building AI products are not building high-risk AI systems. The Act’s heaviest obligations (the full provider list above) apply specifically to high-risk AI systems, defined in Article 6 and Annex III.

High-risk categories include:

- AI used in hiring, workforce management, or employment decisions

- AI making decisions about access to credit, insurance, or essential services

- AI used in education to evaluate students

- AI used in law enforcement or border control

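- AI in medical devices for diagnosis or treatment (high-risk via Article 6(1) and Annex I, the list of EU product-safety legislation that includes the Medical Device Regulation, rather than via Annex III)

- Remote biometric identification systems (simple verification that a person is who they claim to be is excluded)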

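General-purpose AI assistants, customer service chatbots, content generation tools, coding assistants, and search features generally don’t fall into high-risk categories under the Act as written, unless they’re used for one of the purposes above. A chatbot that screens job applicants, for example, is a hiring tool.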

So what actually applies to most US startups?

Even if you’re not high-risk, the following obligations still apply:

Article 4: AI literacy (applies since 2 February 2025)

Providers and deployers must take measures to ensure “a sufficient level of AI literacy” among staff involved in developing, deploying, or overseeing AI systems. That doesn’t mean technical expertise across the board: the people making decisions about your AI systems need to understand what those systems can and can’t do, and where the risks are. Document what training you’ve provided.
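Article 4 doesn’t prescribe a record format, so one option is a lightweight training log that flags AI-involved staff with nothing recorded. The `StaffMember` fields and the `literacy_gaps` function below are hypothetical, a minimal sketch assuming you track training as dated topics per person.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class StaffMember:
    name: str
    works_with_ai: bool  # involved in building, deploying, or overseeing AI systems
    # Each entry is (date completed, training topic); empty means nothing recorded
    trainings: list[tuple[date, str]] = field(default_factory=list)


def literacy_gaps(staff: list[StaffMember]) -> list[str]:
    """Names of AI-involved staff with no recorded AI literacy training."""
    return [s.name for s in staff if s.works_with_ai and not s.trainings]
```

The log doubles as the documentation the paragraph above recommends keeping.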

Article 5: Prohibited practices (applies since 2 February 2025)

These prohibitions apply to every AI system, regardless of risk class:

- AI systems that use subliminal, manipulative, or deceptive techniques to distort users’ behaviour in ways that cause significant harm

- AI that exploits vulnerabilities of specific groups (age, disability) to cause harm

- Social scoring systems for general purposes

- Most real-time remote biometric identification in publicly accessible spaces for law enforcement purposes

- Emotion recognition in workplaces or educational institutions (with narrow exceptions)

If your AI product does any of these things, it’s illegal in the EU now. Not in August 2026. Now.

Article 50: Transparency obligations

Article 50 applies to AI systems that interact with people, regardless of risk classification. If your product includes a chatbot or any AI system that generates synthetic content:

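- Article 50(1): Providers must design systems that interact directly with people so that users are informed they’re dealing with an AI, unless that is obvious from the context

- Article 50(2): Providers of systems that generate synthetic audio, image, video, or text must mark the output in a machine-readable format so it is detectable as AI-generated, with exceptions such as tools that only assist standard editing

- Article 50(4): Deployers who publish deepfakes must disclose that the content is artificially generated or manipulated; for evidently artistic, satirical, or fictional work, the disclosure can be made in a way that doesn’t spoil the work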

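These are not high-risk requirements, and Article 50 covers the vast majority of customer-facing AI products. Under the Act as adopted it applies from 2 August 2026 (the Omnibus deal in the update at the top of this article would move that to 2 November 2026). From then on, if users interact with your AI and you haven’t disclosed that it’s AI, you’re non-compliant.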
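A minimal sketch of both ideas, assuming a chat product where each conversation gets its own bot instance: the first reply carries an AI disclosure, and generated content can be wrapped with a machine-readable label. The disclosure wording, the `SupportBot` class, and the JSON field names in `mark_generated` are illustrative assumptions; the Act doesn’t prescribe them, and production marking under Article 50(2) may well rely on watermarking or provenance standards such as C2PA instead.

```python
import json
from datetime import datetime, timezone

# Hypothetical disclosure text; Article 50 does not prescribe wording.
AI_DISCLOSURE = "You are chatting with an AI assistant, not a human."


class SupportBot:
    """One instance per conversation; the first reply carries the AI disclosure."""

    def __init__(self, generate):
        self._generate = generate  # any callable: prompt -> reply text (e.g. an LLM API wrapper)
        self._disclosed = False

    def reply(self, prompt: str) -> str:
        text = self._generate(prompt)
        if not self._disclosed:
            self._disclosed = True
            return f"{AI_DISCLOSURE}\n\n{text}"
        return text


def mark_generated(text: str, model: str) -> str:
    """Wrap generated text in a simple machine-readable 'AI-generated' envelope.

    The JSON structure and field names are illustrative, not a standard.
    """
    return json.dumps({
        "content": text,
        "ai_generated": True,
        "model": model,
        "generated_at": datetime.now(timezone.utc).isoformat(),
    })
```

Passing the model call in as a plain callable keeps the disclosure logic independent of whichever AI API sits underneath.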

The August 2026 deadline: what it actually means

The Act has a phased implementation schedule:

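- February 2025: Prohibited practices (Article 5) and AI literacy (Article 4) applied

- August 2025: General-purpose AI model obligations (Chapter V, Articles 51–56) and the penalty provisions (Chapter XII) applied

- August 2026: Annex III high-risk obligations and Article 50 transparency apply, along with most remaining provisions. The Commission’s power to fine general-purpose AI model providers (Article 101) also starts.

- August 2027: High-risk obligations apply to AI in products covered by Annex I, such as medical devices (Article 6(1))

Under the Act as adopted, 2 August 2026 is when most of the regime applies. The Omnibus deal noted at the top of this article would push the Annex III date to December 2027 and Article 50 to November 2026, once formally adopted.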
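The phased timetable is easy to encode as data, which makes it simple to update once the Omnibus changes are formally adopted. The dates below follow Article 113 of the Act as originally adopted; `MILESTONES` and `applicable_on` are hypothetical names, and the labels summarise rather than quote the Act.

```python
from datetime import date

# Application dates under Article 113 of the Act as originally adopted.
# The Omnibus deal described in the update at the top would move the
# Annex III and Article 50 dates; edit this table once it is adopted.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices (Article 5) and AI literacy (Article 4)"),
    (date(2025, 8, 2), "General-purpose AI model obligations (Chapter V) and penalties"),
    (date(2026, 8, 2), "Annex III high-risk obligations and Article 50 transparency"),
    (date(2027, 8, 2), "High-risk AI in products covered by Annex I (Article 6(1))"),
]


def applicable_on(day: date) -> list[str]:
    """Labels of milestones whose application date is on or before `day`."""
    return [label for start, label in MILESTONES if start <= day]
```

For example, `applicable_on(date(2026, 1, 1))` returns only the first two milestones.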

Penalties under the Act:

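- Prohibited practices violations: up to €35 million or 7% of global annual turnover, whichever is higher

- Other violations (including high-risk obligations): up to €15 million or 3% of global annual turnover, whichever is higher

- Providing incorrect information to authorities: up to €7.5 million or 1% of global annual turnover, whichever is higher

These caps are the same for EU and non-EU companies. One difference does matter for startups: for SMEs, including start-ups, Article 99(6) caps each fine at whichever of the two amounts is lower.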
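The “whichever is higher” rule, and its reversal for SMEs, is easiest to see as arithmetic. The `max_fine_eur` function below is a hypothetical helper that computes the statutory ceiling of one fine tier; actual fines are set case by case below that ceiling, taking into account the factors listed in Article 99.

```python
def max_fine_eur(fixed_cap_eur: int, pct_of_turnover: int,
                 global_turnover_eur: int, is_sme: bool) -> float:
    """Statutory ceiling for one AI Act fine tier (Article 99).

    Non-SMEs: whichever of the fixed cap and the turnover cap is higher.
    SMEs, including start-ups: whichever is lower (Article 99(6)).
    """
    # Percentage given as a whole number (7 means 7%) to keep the arithmetic exact
    turnover_cap = global_turnover_eur * pct_of_turnover / 100
    if is_sme:
        return min(fixed_cap_eur, turnover_cap)
    return max(fixed_cap_eur, turnover_cap)
```

For a startup with €20 million in global turnover, the prohibited-practices tier tops out at €1.4 million (7% of turnover) rather than €35 million: `max_fine_eur(35_000_000, 7, 20_000_000, is_sme=True)` returns `1400000.0`.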

What to actually do before August 2026

Step 1: Map your AI systems

List every AI system your product contains or integrates. Include anything that uses an AI API, embedded model, or AI-powered third-party service. The mapping exercise often reveals more AI components than teams expect, especially in products that have grown over time.
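One starting point for the inventory is your dependency manifests. The sketch below scans `requirements.txt`-style text for a hypothetical starter list of AI SDK package names. It won’t catch direct HTTP calls to AI APIs or AI features inside third-party SaaS tools, so treat a clean result as incomplete rather than as proof there’s no AI.

```python
import re

# Hypothetical starter list of Python packages that usually signal an AI component.
# Extend it for your stack; a match is a prompt to investigate, not a classification.
AI_PACKAGES = {"openai", "anthropic", "google-generativeai", "transformers", "langchain"}


def find_ai_dependencies(requirements_text: str) -> list[str]:
    """Return AI-related package names found in requirements.txt-style text."""
    found = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        # The package name is everything before a version specifier, extra, or marker
        name = re.split(r"[\s\[<>=!~;]", line, maxsplit=1)[0].lower()
        if name in AI_PACKAGES:
            found.append(name)
    return found
```

Running the same idea over every service’s manifest gives a first list to reconcile against what product and sales teams say the product does.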

Step 2: Determine your role for each system

For each AI system, determine whether you’re a provider, a deployer, or both. The questions to ask: Did we build this, or are we using a third party’s system as-is? Have we built a custom interface, fine-tuned the model, or integrated it under our brand? If yes, you’re almost certainly a provider.
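The Step 2 questions translate into a rough triage function. `likely_roles` is a hypothetical heuristic following this article’s reading of Article 3(3), not a legal test. It returns a set because one company can be both provider and deployer of the same system, for example when it builds a support bot and also runs it for its own customers.

```python
def likely_roles(built_in_house: bool, custom_interface: bool, fine_tuned: bool,
                 under_own_brand: bool, used_in_own_operations: bool) -> set[str]:
    """First-pass triage of AI Act roles for one AI system (hypothetical heuristic).

    Mirrors the Step 2 questions; Articles 3(3), 3(4) and 25 decide the
    actual roles, and edge cases need legal advice.
    """
    roles: set[str] = set()
    # Building, customising, or branding the system points to provider status
    if built_in_house or custom_interface or fine_tuned or under_own_brand:
        roles.add("provider")
    # Using an AI system under your own authority makes you a deployer
    if used_in_own_operations:
        roles.add("deployer")
    return roles
```

A support bot built on a third-party API, with your own interface, shipped under your brand and run by your own support team, returns `{"provider", "deployer"}`.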

Step 3: Classify risk level

For each system where you’re a provider, determine whether it falls into a high-risk category under Annex III. If it does, you need the full provider compliance programme. If it doesn’t, you still need to comply with Article 4 (AI literacy), Article 5 (prohibited practices), and Article 50 (transparency) at minimum.
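Step 3 can be sketched the same way. The area labels and the `minimum_obligations` function below are hypothetical; the set covers only the Annex III areas this article names, and the sketch ignores both the Article 6(3) carve-out for Annex III systems that pose no significant risk and the separate Annex I route for regulated products.

```python
# Hypothetical labels for the Annex III areas named in this article
# (Annex III has more, e.g. critical infrastructure and administration of justice).
ANNEX_III_AREAS = {
    "employment", "credit_and_essential_services", "education",
    "law_enforcement", "migration_and_border_control", "biometrics",
}

# Obligations this article says apply regardless of risk classification
BASELINE = [
    "Article 4 (AI literacy)",
    "Article 5 (prohibited practices)",
    "Article 50 (transparency, where applicable)",
]


def minimum_obligations(role: str, use_areas: set[str]) -> list[str]:
    """First-pass list of obligation headings for one AI system."""
    obligations = list(BASELINE)
    if use_areas & ANNEX_III_AREAS:  # any overlap with an Annex III area
        if role == "provider":
            obligations.append("Full high-risk provider programme (Chapter III)")
        else:
            obligations.append("High-risk deployer obligations (Article 26)")
    return obligations
```

A customer support bot tagged with no Annex III area gets only the three baseline headings; the same bot repurposed to screen applicants (`{"employment"}`) picks up the full provider programme.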

Step 4: Check Article 50 compliance now

If you have chatbots or AI-generated content, your transparency disclosures should be in place well before Article 50 applies. These apply regardless of risk classification. Add clear disclosure that your product uses AI.

Step 5: Appoint an EU representative if needed

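If you’re a provider established outside the EU and you place high-risk AI systems on the EU market, Article 22 requires you to appoint, by written mandate, an authorised representative established in the EU before making the system available there. Start early: finding the right representative and executing the mandate takes time.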

Step 6: Plan your documentation

For high-risk systems, you’ll need technical documentation (Annex IV), a risk management file (Article 9), data governance documentation (Article 10), and an EU Declaration of Conformity (Article 47 and Annex V). These take months to prepare properly.

Will the EU actually enforce this against a US startup?

The same question was asked about GDPR in 2018. The answer is now in the data.

Since May 2018, EU authorities have issued €5.65 billion in GDPR fines. Of that total, €4.68 billion — 83% — went to US companies. EU companies, despite operating directly under the regulation, received just 9%.
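The 83% figure is a share of fine value, not of the number of fines. A quick check with the totals quoted above:

```python
total_fines_bn = 5.65  # all GDPR fines since May 2018, EUR billions (figure quoted above)
us_fines_bn = 4.68     # fines issued to US companies, EUR billions (figure quoted above)

us_share = us_fines_bn / total_fines_bn
print(f"US share of GDPR fines by value: {us_share:.1%}")
# prints: US share of GDPR fines by value: 82.8%
```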

Cumulative GDPR fines by country of origin, 2018–2024 (€billions). Source: Center for Data Innovation

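Expect the AI Act to be enforced along similar lines: it reaches non-EU providers on the same extraterritorial basis, and its fines are also calculated on global turnover. Non-EU companies are not protected by distance.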

Beyond direct enforcement: if your EU customers are enterprises or regulated companies, they have their own AI Act obligations. Their compliance programmes will include questions about the AI systems they use and their providers’ documentation. If you can’t answer basic questions about your risk management approach, or provide a Declaration of Conformity for a high-risk system, you’ll lose those deals to competitors who can.

Compliance has become a procurement requirement. Even if you’re not worried about direct enforcement, your EU customers are asking.

This article is part of the ComplyDrive EU AI Act Knowledge Base. ComplyDrive publishes an EU AI Act Compliance Checklist and Sample Documentation — 47 checklist items across 5 phases, with 9 complete example compliance documents. Details at complydrive.ai.